
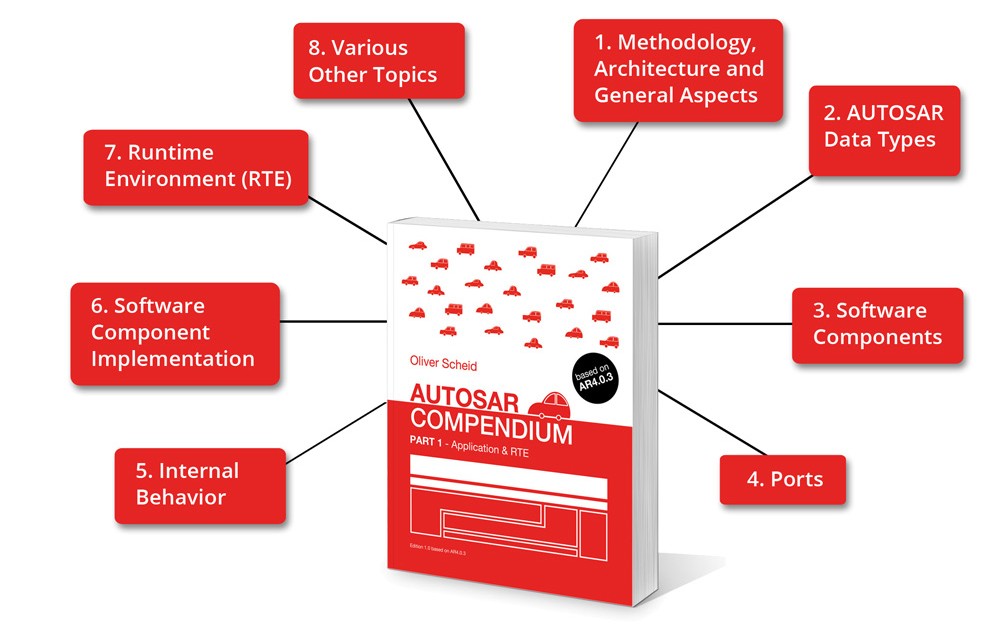

The AUTOSAR Compendium – Part 1 summarizes the first part of the AUTOSAR 4.0.3 specification, namely the Application Layer and the RTE. It is divided into 8 main topics, which cover the following subjects:

- Different Methodology Views and Levels

- Main Types of Interfaces

- Application Layer

- Virtual Functional Bus (VFB) & Runtime Environment (RTE)

- Basic Software Layers and Stacks

- Concepts and Elements

- Application Data Types

- Implementation Data Types

- Data Prototypes

- Computation Method

- Unit

- Data Constraints

- Software Component Types and Prototypes

- Application SW-C

- Service SW-C

- Sensor/Actuator SW-C

- ECU Abstraction SW-C

- Complex Device Driver SW-C

- Service Proxy SW-C

- Nv-Block SW-C

- Port Prototypes

- Port Interfaces

- Communication Specification (ComSpec)

- Unconnected Ports

- Port Groups

- AUTOSAR Services

- Runnable Entity

- Inter-Runnable Communication

- Per Instance Memory (PIM)

- Service Dependencies / Service Needs

- Information on Implementation

- Mapping of Internal Behavior

- RTE Events

- RTE Generator

- Task and Task Mapping

- RTE ECU Configuration

- Mode Management

- ECU Abstraction

- Multicore

- Data Conversion

- Measurement & Calibration

- Variant Handling